WHY LLM API

Scale AI smarter thanks to:

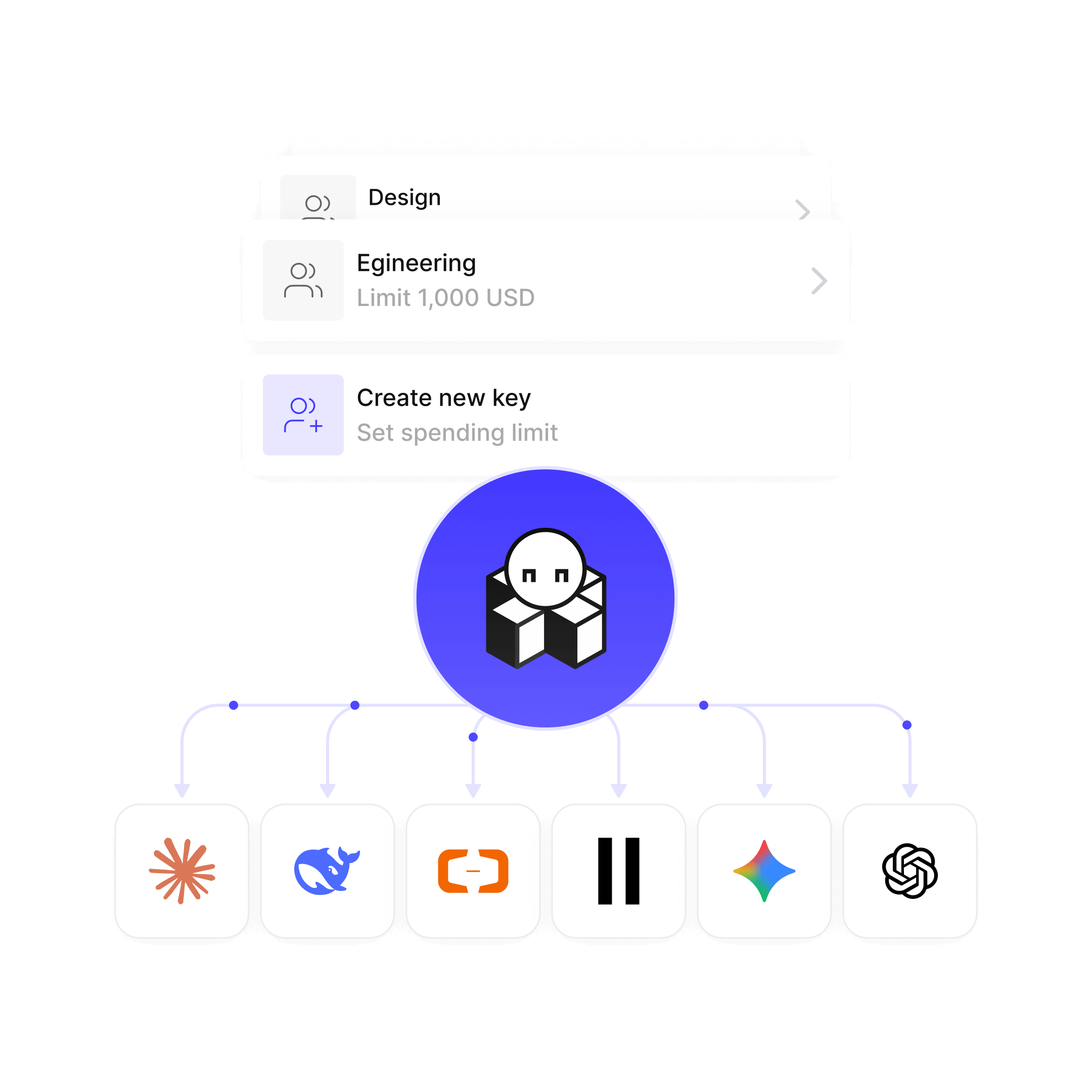

Team-level spending limits

Precise control over model access & usage

See every dollar spent, across 300+ models

All you need in one place

Budget limits

Set a spending cap per organization. Requests stop when the limit is hit — no overspend, ever.
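As a rough illustration of how a per-organization cap can behave, here is a minimal Python sketch. It is not the product's implementation: the `OrgBudget` class, its field names, and the choice to reject a request that would push spend past the cap are all assumptions made for the example.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a request would push an organization past its cap."""


@dataclass
class OrgBudget:
    # Hypothetical field names, chosen for illustration only.
    cap_usd: float
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> None:
        """Record a request's cost, or reject it if it would exceed the cap."""
        if self.spent_usd + cost_usd > self.cap_usd:
            raise BudgetExceeded(
                f"cap of {self.cap_usd:.2f} USD reached; request rejected"
            )
        self.spent_usd += cost_usd


budget = OrgBudget(cap_usd=10.0)
budget.charge(6.0)  # accepted: 6.00 USD spent so far
try:
    budget.charge(5.0)  # would bring the total to 11.00 USD, so it is rejected
except BudgetExceeded as exc:
    print(exc)
```

The rejected request leaves the recorded spend unchanged, so later requests that still fit under the cap go through.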

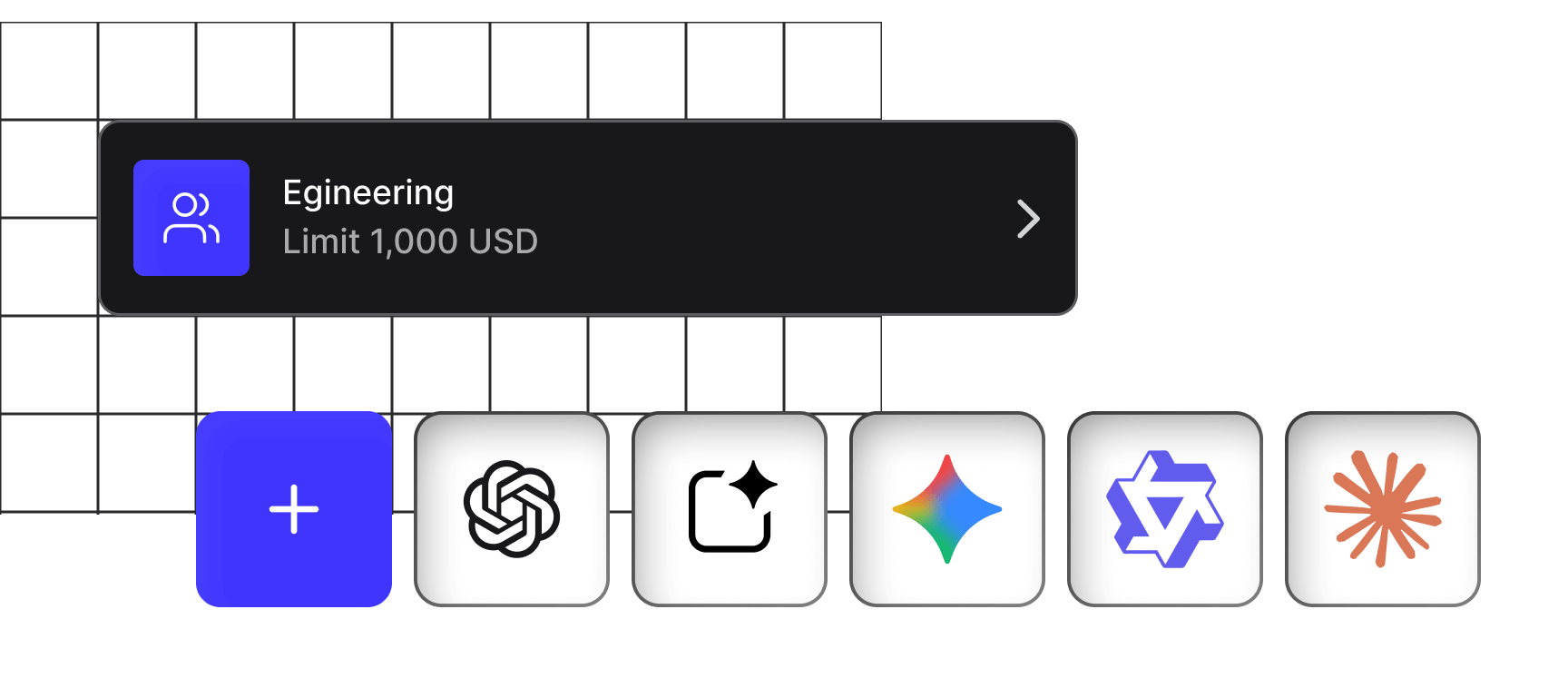

Model access controls

Whitelist or blacklist specific models per organization. Route teams to the right model for their use case.
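One way to picture whitelist and blacklist rules is as a small policy check that runs before a request is routed. The sketch below is illustrative only: `ModelPolicy`, its fields, and the placeholder model names are hypothetical, and it assumes a blacklist entry always wins over a whitelist entry.

```python
from dataclasses import dataclass, field


@dataclass
class ModelPolicy:
    # If non-empty, only these models may be used (whitelist).
    allowed: set = field(default_factory=set)
    # These models are always rejected (blacklist).
    blocked: set = field(default_factory=set)

    def permits(self, model: str) -> bool:
        """Return True if the organization may call the given model."""
        if model in self.blocked:
            return False
        # An empty whitelist means "no whitelist restriction".
        return not self.allowed or model in self.allowed


# Placeholder model names, not real model identifiers.
research = ModelPolicy(blocked={"expensive-model"})
support = ModelPolicy(allowed={"small-model"})

print(research.permits("small-model"))      # True
print(research.permits("expensive-model"))  # False
print(support.permits("medium-model"))      # False
```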

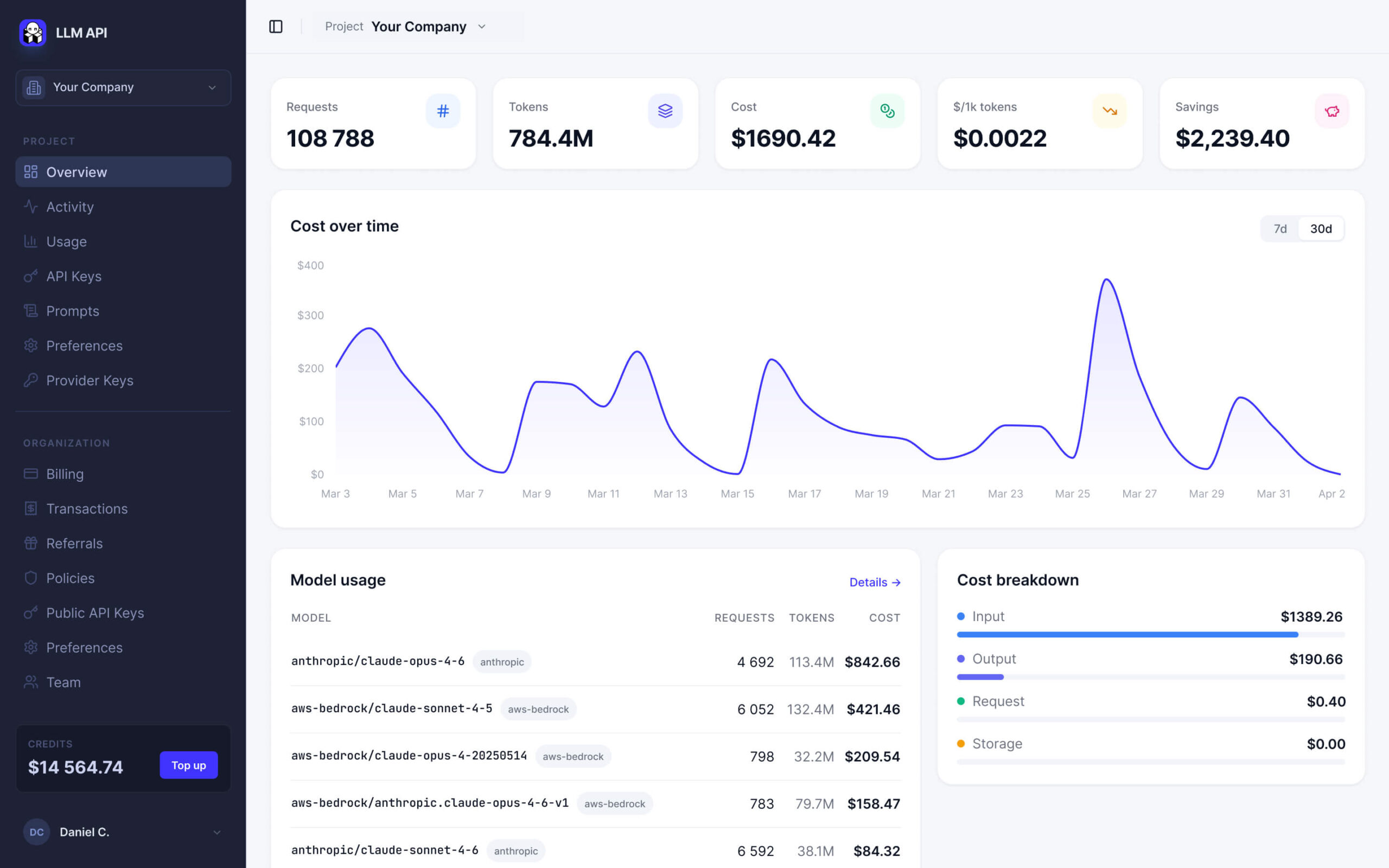

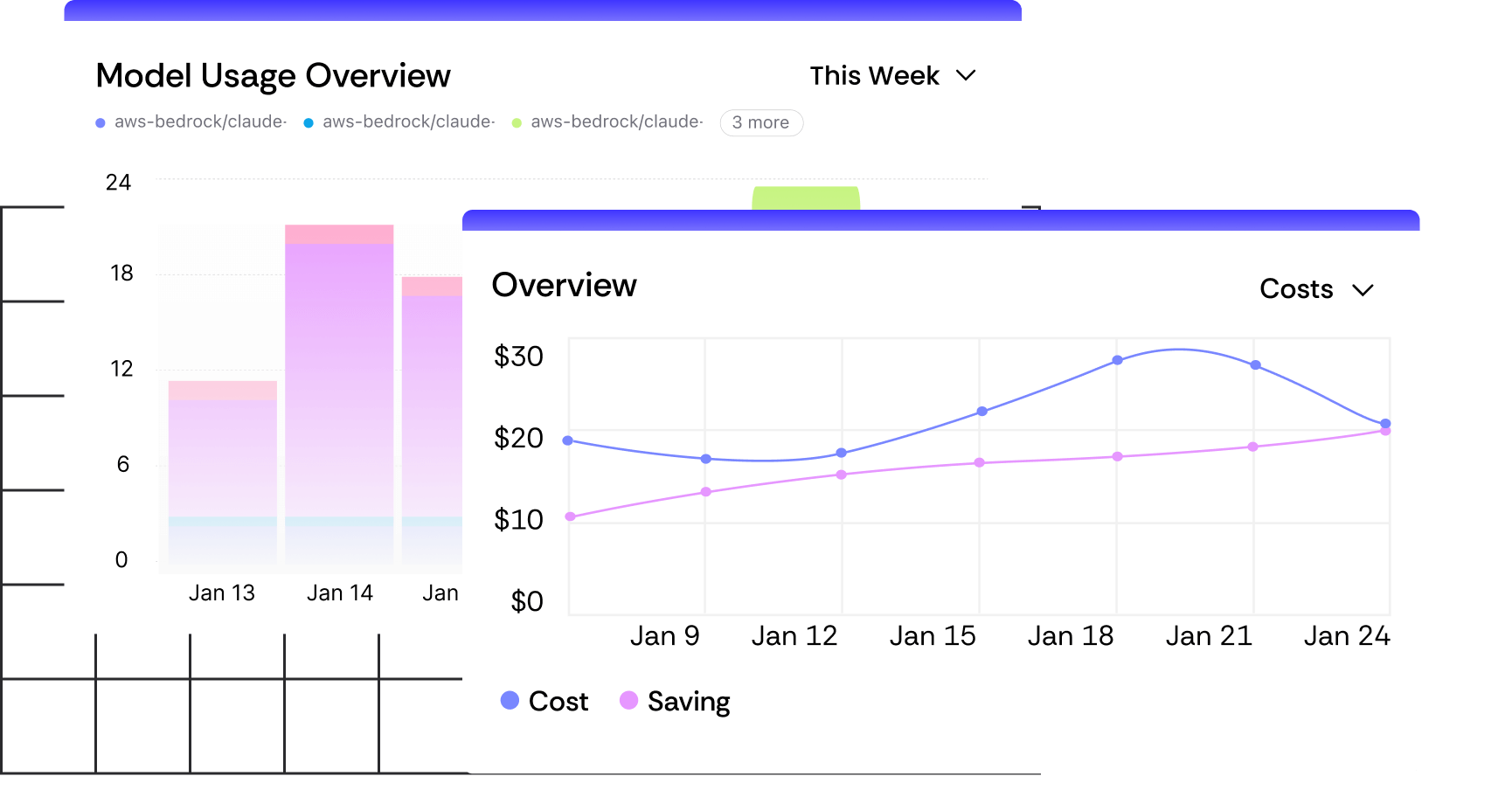

Real-time spend dashboard

Track requests, tokens, inference cost, savings, and average cost per 1K tokens, broken down by model and provider.
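For readers who want the arithmetic behind "cost per 1K tokens", it is total cost divided by total tokens, multiplied by 1,000. The Python sketch below groups made-up usage records by model; the record layout and model names are assumptions, not the dashboard's data format.

```python
from collections import defaultdict

# Hypothetical usage records: (model, tokens, cost in USD).
records = [
    ("model-a", 1_000_000, 2.00),
    ("model-a", 500_000, 1.00),
    ("model-b", 200_000, 1.00),
]


def cost_per_1k_by_model(rows):
    """Return average cost per 1K tokens for each model in rows."""
    tokens = defaultdict(int)
    cost = defaultdict(float)
    for model, n_tokens, usd in rows:
        tokens[model] += n_tokens
        cost[model] += usd
    # Skip models with zero tokens to avoid dividing by zero.
    return {m: cost[m] / tokens[m] * 1000 for m in tokens if tokens[m] > 0}


for model, value in cost_per_1k_by_model(records).items():
    print(f"{model}: {value:.4f} USD per 1K tokens")
```

With these sample numbers, model-a comes to about 0.002 USD and model-b to about 0.005 USD per 1K tokens.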

Automatic budget notifications

Email alerts when balance runs low or when usage jumps 20%+ week-over-week.
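The 20% week-over-week rule can be read as a simple relative-change check. This is a sketch under stated assumptions, not the product's alert logic: the function name is hypothetical, the comparison is on weekly spend in USD, and a week with no prior spend is treated as having no baseline to compare against.

```python
def usage_jumped(previous_week_usd: float, current_week_usd: float,
                 threshold: float = 0.20) -> bool:
    """Return True if spend grew by at least `threshold` week-over-week."""
    if previous_week_usd <= 0:
        # Assumption: without a baseline week, no jump is reported.
        return False
    change = (current_week_usd - previous_week_usd) / previous_week_usd
    return change >= threshold


print(usage_jumped(100.0, 125.0))  # True: spend rose 25%
print(usage_jumped(100.0, 110.0))  # False: spend rose 10%
```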

Unlimited organizations

Separate spend tracking per project, team, or client. Full visibility at every level.

Organization context

Add shared context to an organization so every model in that workspace produces better, more relevant results.

Set up enterprise-ready AI infrastructure with a single line of code

Eliminated vendor lock-in

Reduced AI costs by 30–60%

Integration time cut to minutes

How it works

No hidden steps, no unnecessary details — just a smooth setup that lets you dive right in.

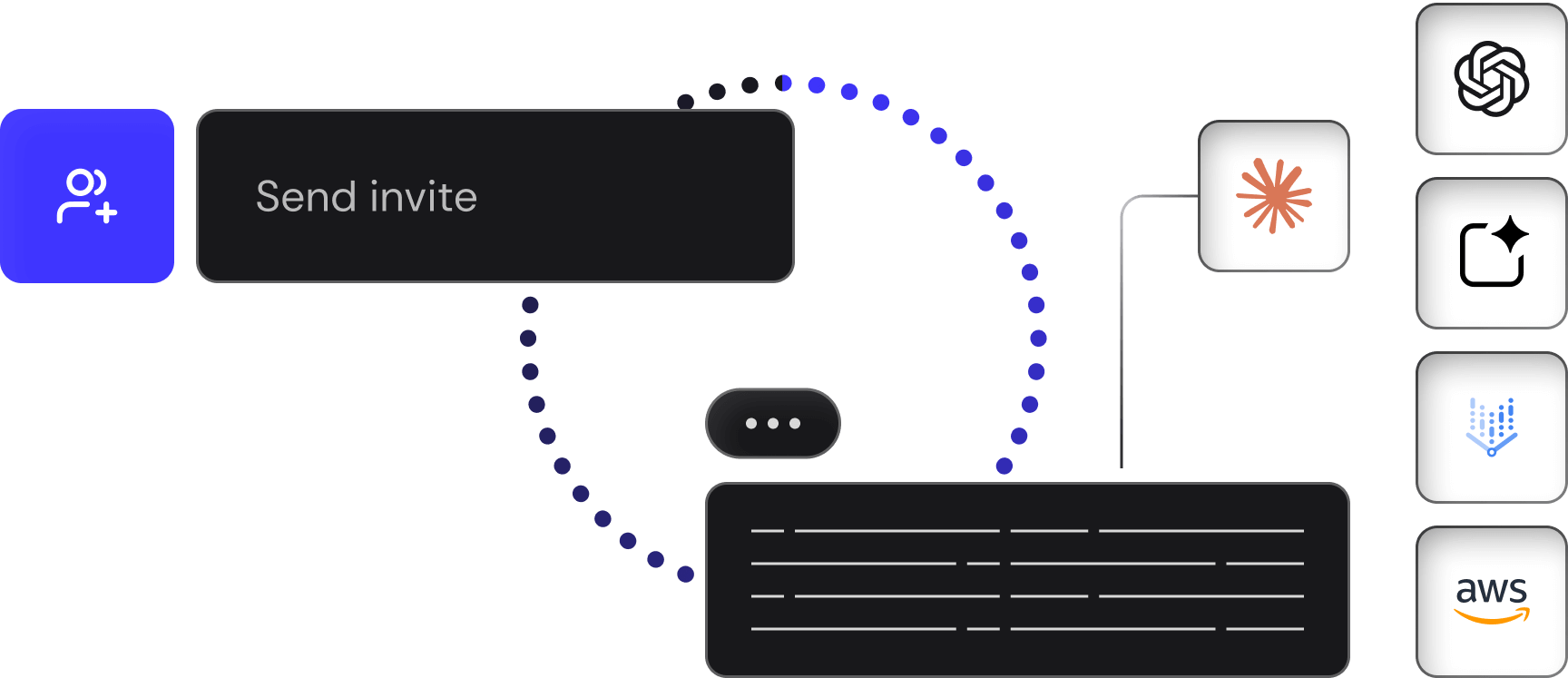

Get Your Key & Invite Your Team

Replace scattered provider accounts with a single API key. Invite your entire team in a single click and assign them their own keys.

Set Budgets & Model Controls

Define spending limits per organization. Restrict which models your team can use — whitelist specific models, or block expensive ones for certain projects.

Watch The Unified Dashboard

See requests, tokens, inference cost, and savings in real time — by project and by model. When usage spikes or balance runs low, you get an email before it becomes a problem.

Get next-level AI infrastructure with LLM API

Multiple providers

Cost optimization

Unified billing

Model comparison

Smart routing

No vendor lock-in

Built-in fallback handling

Centralized team access

One LLM API. 300+ models. Zero friction

- Unified API interface

- Multi-provider support

- Performance monitoring

- Secure key management

- One-click team sync

- Cost-aware analytics

- Per‑model/provider breakdown

- Errors & reliability monitoring